Inspiration and Story Planning

For this project, I wanted to create a 3D music video using Unreal Engine 5 and Adobe Premiere. Creating a music video offers opportunities to experiment with different shots, composition methods and VFX. Another aspect I wanted to explore was editing and post-production, since editing the footage into a seamless story would become a large and vital part of the video itself.

The song chosen for this piece is called Cutlery, a Japanese Vocaloid song created by ewe that explores the anxieties and struggles of a one-sided relationship. The song's music video is presented in 2D and is generally slow-paced, which emulates the melancholic tone of the music. It is also accompanied by strange but symbolic imagery that reflects the character's relationship falling apart throughout the video.

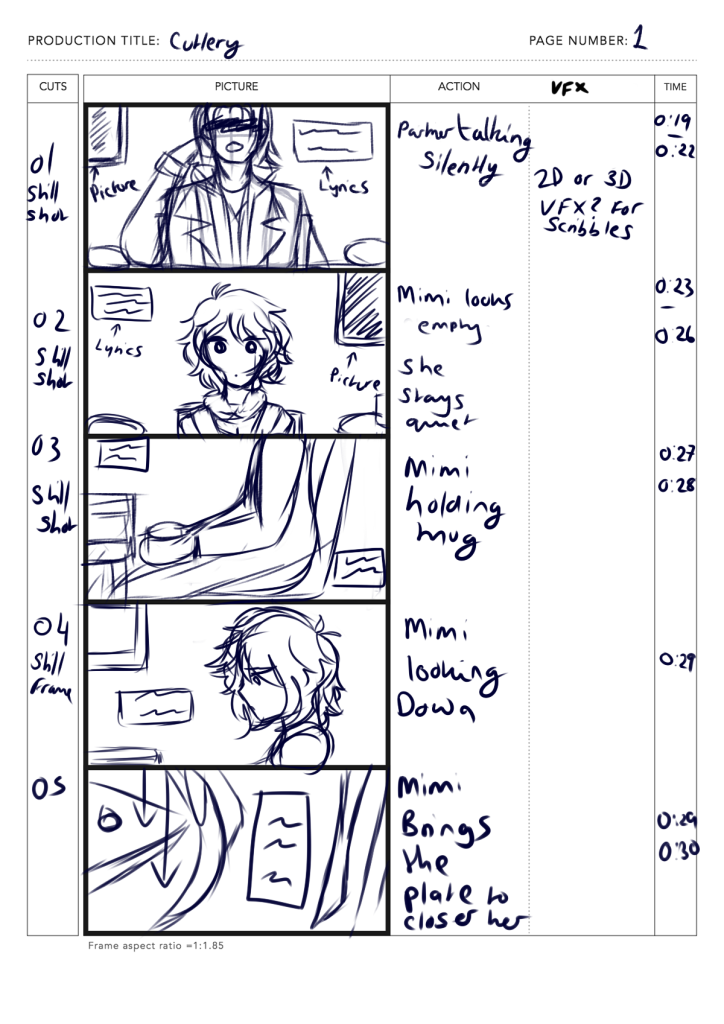

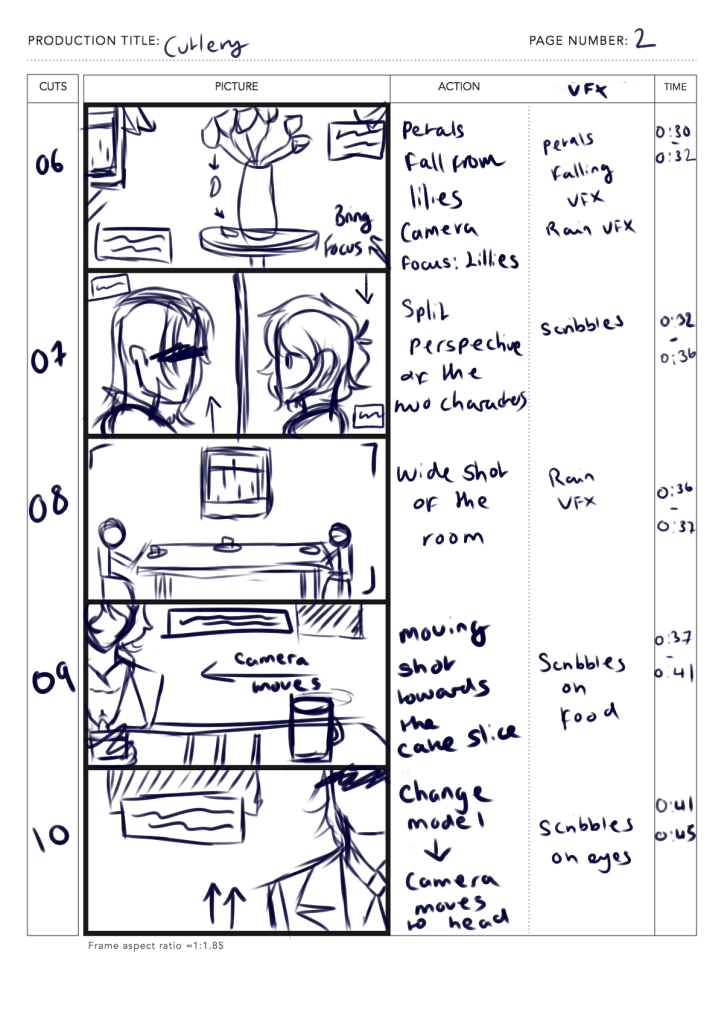

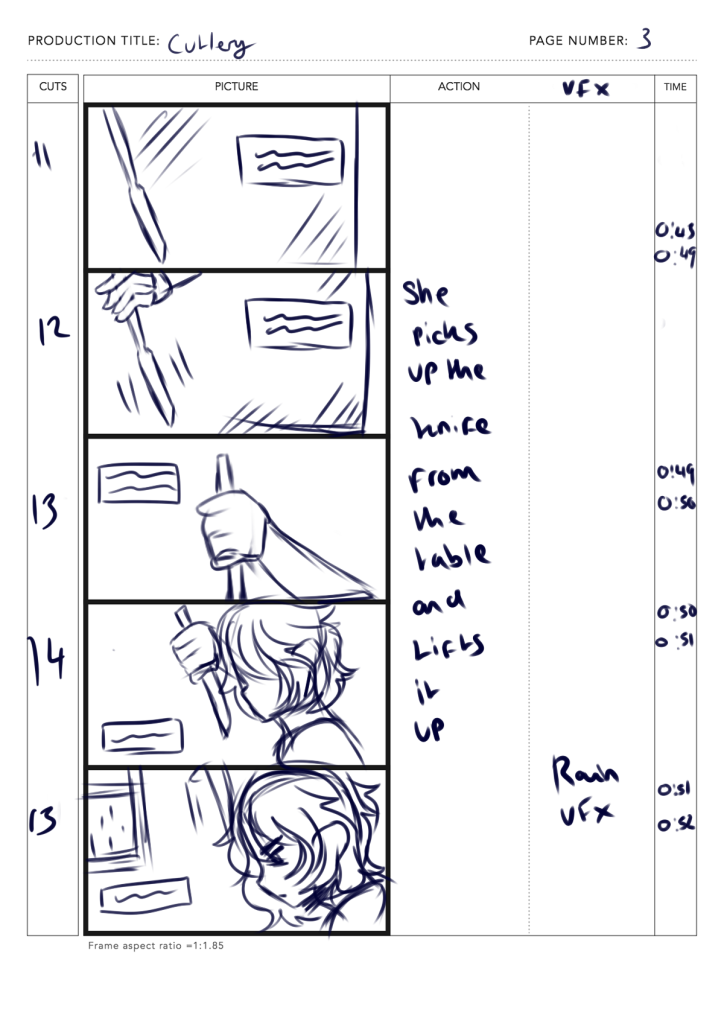

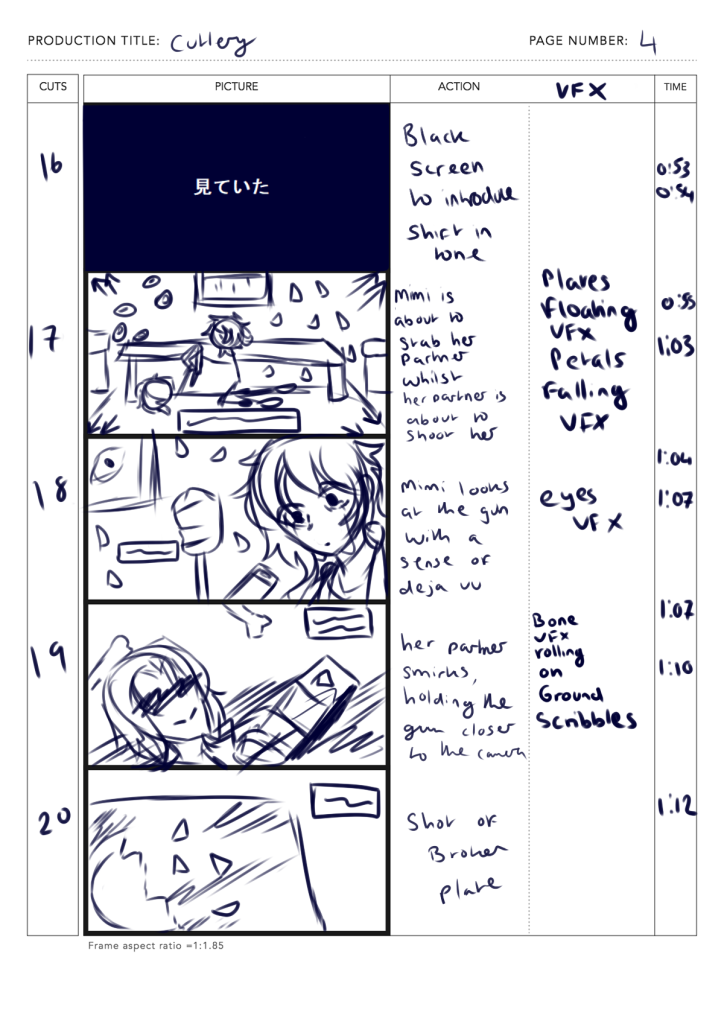

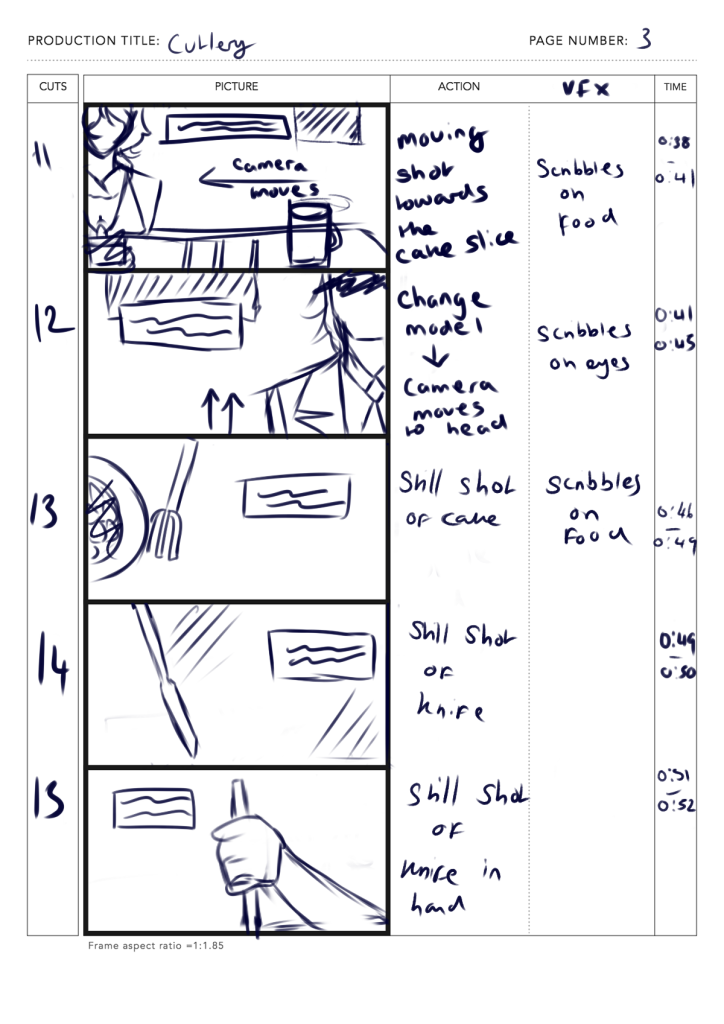

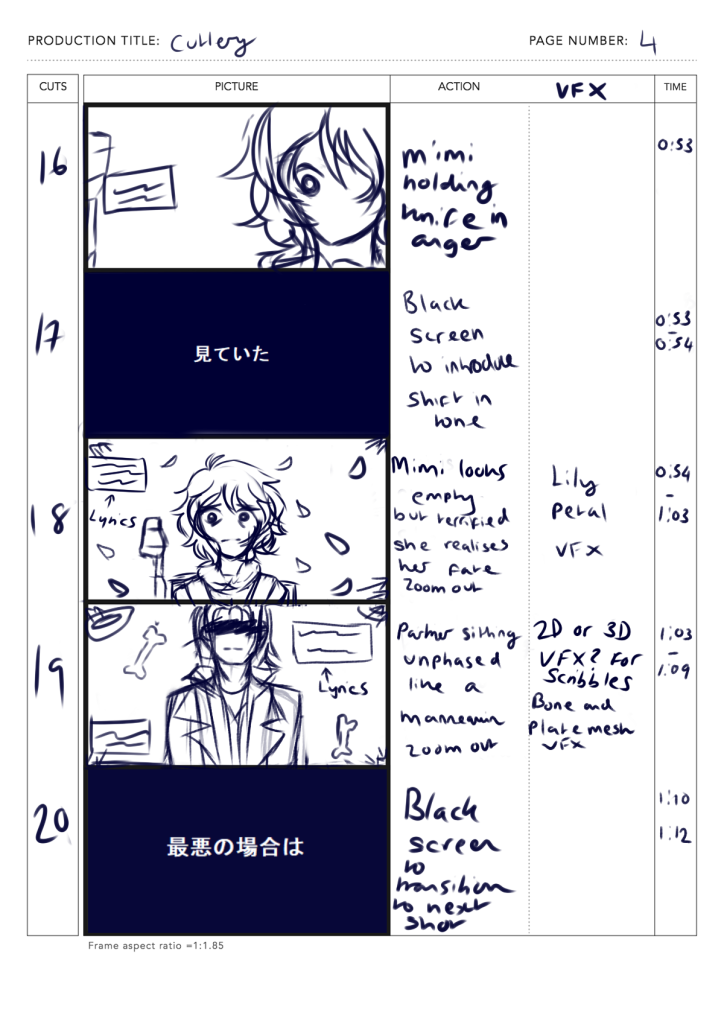

Whilst 60 seconds could only provide a snippet of the song's full story, cutting out the second half and focusing only on the initial buildup and main chorus kept the song's narrative uninterrupted. The main goal of this project is to create a different story whilst still maintaining the song's themes. The plan therefore started with a rough storyboard to build a better understanding of the story and the song's beat.

The story logline created for this music video is as follows:

The main character is stuck in purgatory. She has recently been accidentally shot by her husband and must now spend the rest of her time with the man she hates, in a confined space that vaguely resembles their dining room.

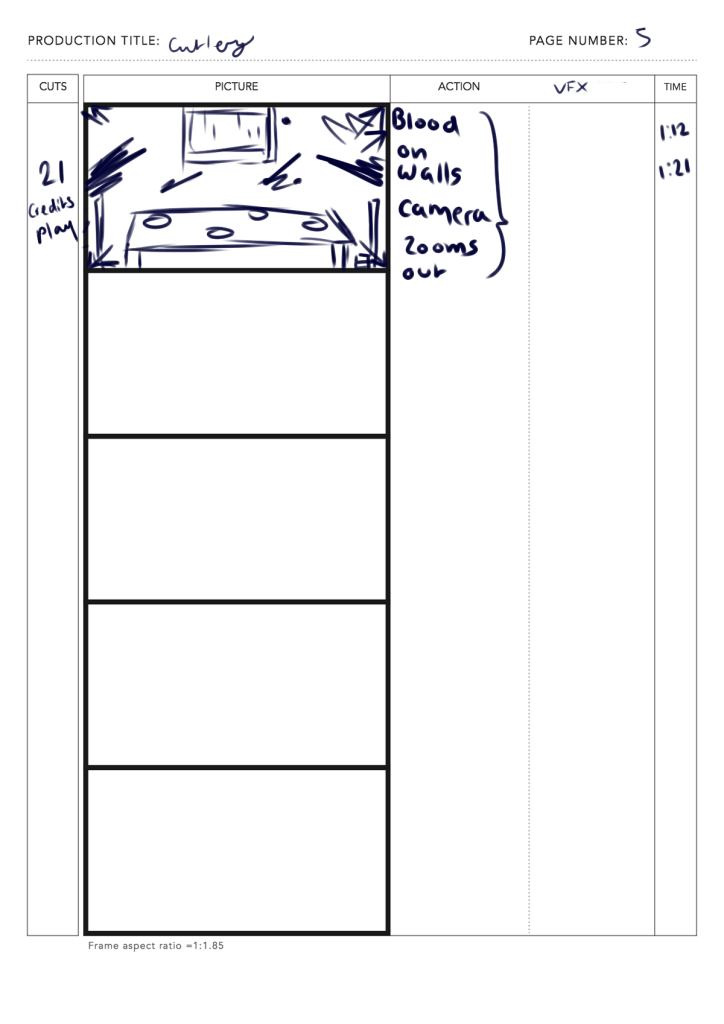

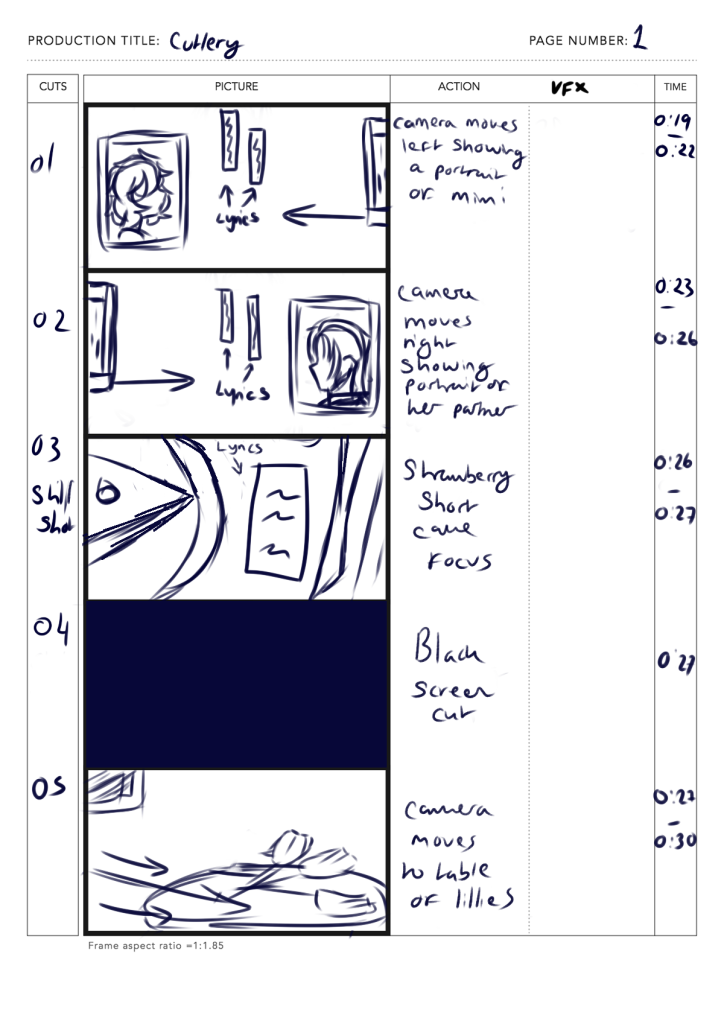

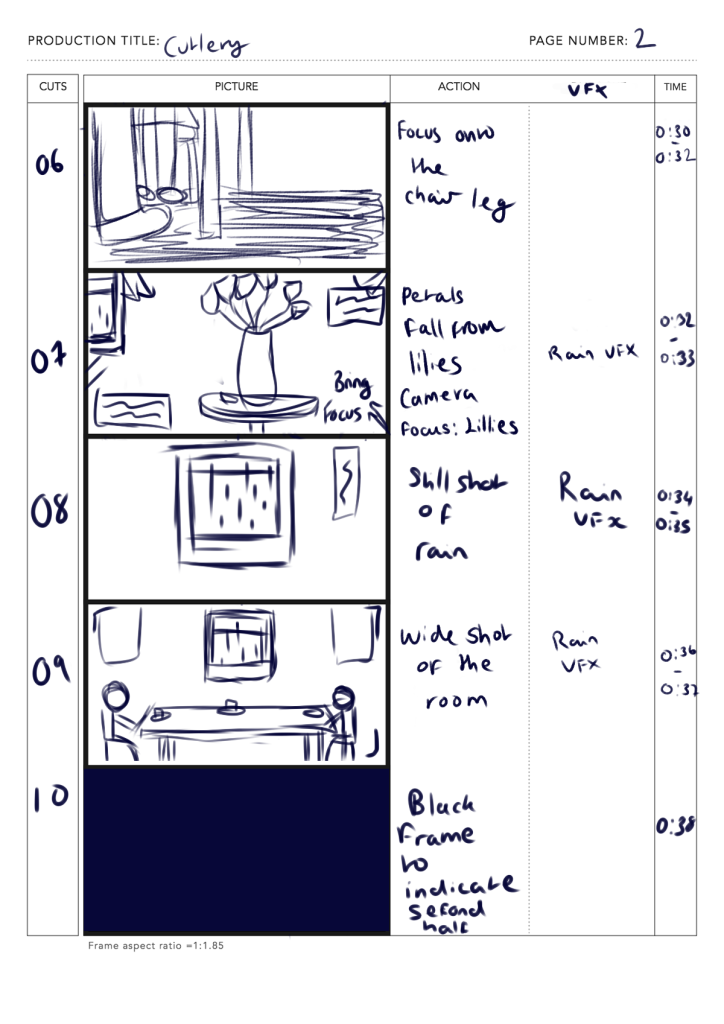

Storyboard Planning

For the MV to follow the rhythm, the storyboard has a lot of cuts between static and moving shots. Combining the two creates a varied sequence that keeps the audience engaged, and it also leaves room to explore different shot compositions.

When looking for inspiration, I also drew on existing 3D MVs of the same genre.

The original idea was for the MV to be entirely 3D, using stop-motion animation to match the slow and melancholic pace of the song. However, while exploring Unreal's animation editor, I realised that setting up a new rigged pose for every frame would quickly become time-consuming. Looking back at the examples, the camera work in these videos creates a fluid and dynamic feel. This makes the compositions and storytelling far more engaging for the audience, even when the songs aren't upbeat or fast-paced.

To work around the rigging issue, the storyboard was changed to focus on camera movement. This simulates the same dynamic feel shown in the examples whilst still maintaining the slow and choppy pace.

To make up for the lack of animation, especially in the characters' facial expressions, I decided to blend 2D and 3D styles by adding hand-drawn frames later, during post-production.

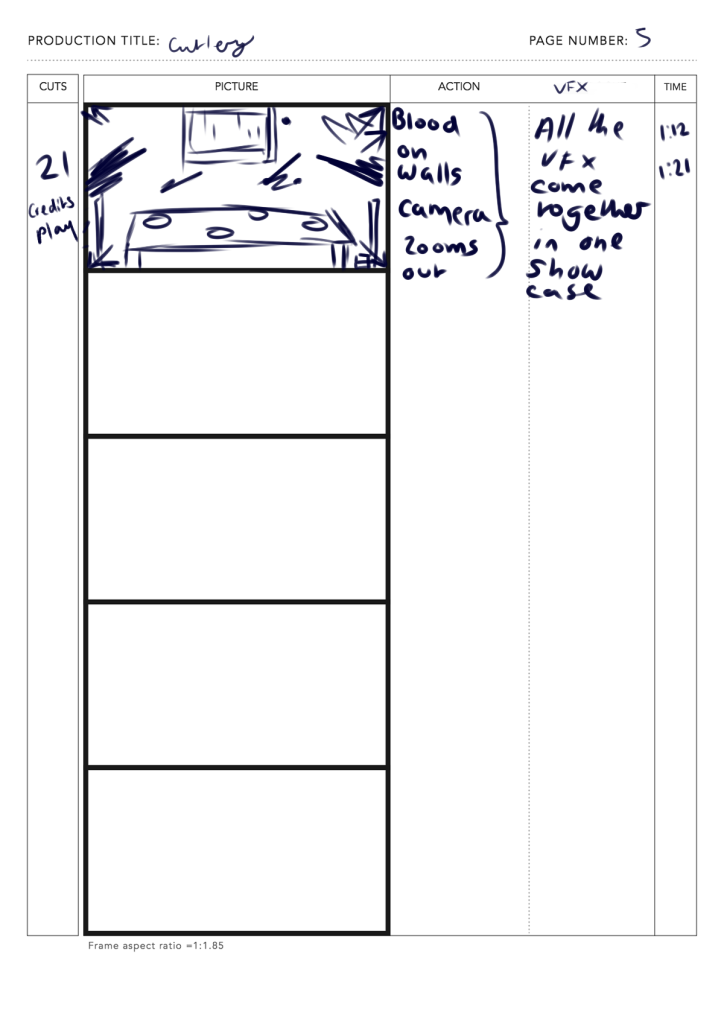

Finally, I turned the storyboard frames into an animatic. This process helps further visualise what the music video will look like, which is especially useful during editing.

VFX Planning

For the VFX, I created a list alongside the storyboard and saved the more elaborate effects for the second half of the video.

Having a list also let me discover and learn a variety of techniques for implementing these effects. It helped me plan which VFX to use throughout the project based on how complex they were. In retrospect, a lot of the project's time was taken up by learning the interface of Unreal's Niagara system and experimenting with different VFX emitters.

Nevertheless, using Niagara has been an enlightening experience, as it opened up a lot of room for experimentation. With the storyboard, I was also able to sketch out what some of the effects could look like. These effects were also inspired by other 2D MVs of the same genre, which is covered in more detail in the VFX blog post.